How we implemented continuous discovery techniques at Progression, and what we’re going to do next.

I joined Progression as Product Lead at the start of the summer, at an exciting time: we’re a young startup that’s still in the thick of finding product-market fit in a crowded market. I was given carte blanche to discover the product that takes us to the next stage. No pressure!

Everything was up for grabs — strategy, roadmap, ways of working — and the team was particularly keen to improve their product discovery practices. So I decided to implement some continuous discovery techniques, heavily informed by a re-read of Teresa Torres’ book Continuous Discovery Habits.

This article is an appraisal of how we implemented aspects of continuous discovery (CD) at Progression and how it panned out: both the things that went well and still form the foundation of how we work, and the challenges and missteps we’re now taking steps to rectify.

I hope it’ll help if you’re considering baking some of these practices into your team, or are just curious about how we work here at Progression.

Getting started

Here’s how we began our continuous discovery journey back in June.

Foundational research and OKRs

Just some of the many user research calls I ran. (P.S. Dovetail is awesome.)

I started building up context by speaking with around 30 users to understand how they currently perceive our value and what their biggest unmet needs are. It was intense, yes, but incredibly valuable. I used the insights to form some early thoughts on strategic themes and opportunity areas.

We defined an OKR that encapsulated both the strategic outcome and our commitment to a continuous discovery methodology. Alongside key results focused on metric goals, we set ones that explicitly committed us to speaking to users each week and shipping experiments.

Design sprint

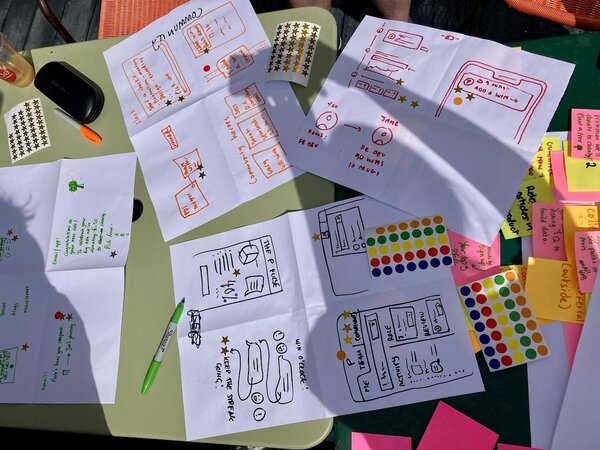

We kicked things off at an in-person all-hands, where I summarised the OKR and gave an overview of my research findings. Then I had the wacky idea to run a micro design sprint in just half a day. Yes, the process that usually takes a whole week.

Ideas flow better with good pens and shiny stickers

Sorry for white-boarding on your table, Second Home

We split into teams, each taking an opportunity theme, and did a rapid ideation, prioritisation and sketching exercise before presenting back. Amazingly, it worked.

What made it work?

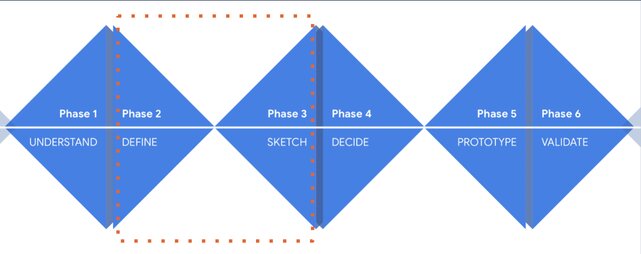

- Not doing the whole triple diamond of a week-long design sprint: we left prototyping and validating until the teams kicked off in earnest.

- Low expectations of usable outcomes: the primary purpose was to have fun defining and sketching some big ideas.

- Guidance on how to structure the time and outputs: every team played back a coherent idea with great storytelling.

- Whole-team involvement: non-product team members participated too, and it was great to see the breadth of viewpoints and ideas that resulted.

Design sprint ‘triple diamond’

Slide with suggested outcome of the micro-sprint

Forming the pods and kicking off

Pod branding and merch is crucial to success

Now it was time for the hard work! Here’s how we set the team up for continuous discovery success.

Team setup

We split our team of two designers and four engineers into two ‘pods’, each owning an opportunity theme. We’re light on product managers at the moment, so we decided that our engineering lead Sam and I would ‘float’ between pods (more on this later).

Process and cadence

I introduced a lightweight process for the pods, as recommended in the book. This consisted of a loose four-week plan of discovery and experimentation activities, with ‘show and tell’ playbacks each Friday to keep everyone in sync.

We were careful to keep this light-touch, in recognition that CD is a practice rather than a playbook. We wanted to give autonomy and ownership to the pods to decide for themselves how they worked.

Idea-generating and refining

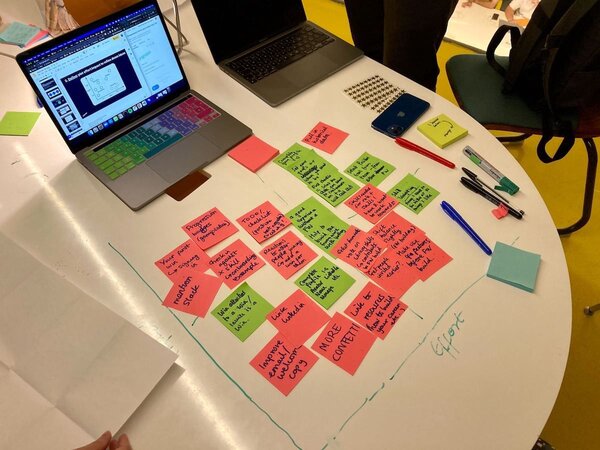

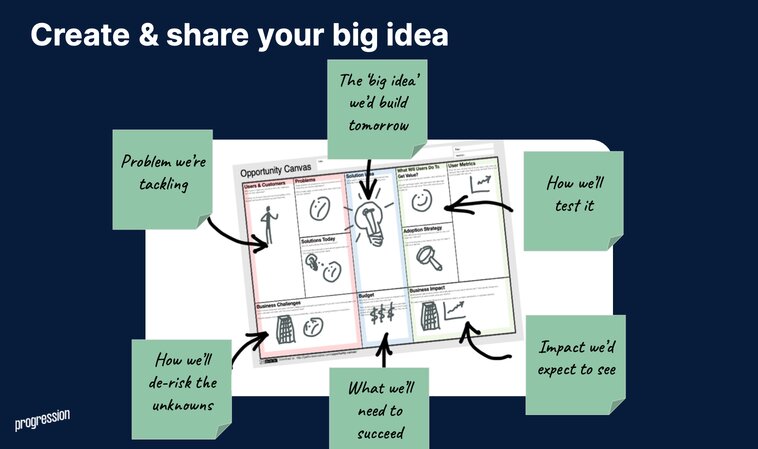

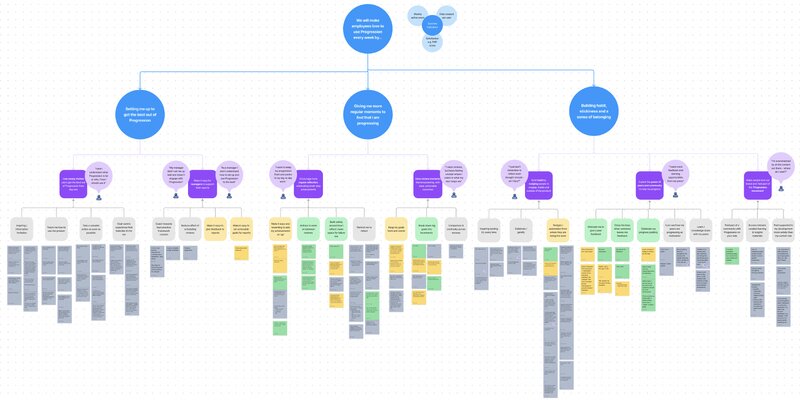

The first pod activity was to collate the ideas from the design sprint day into an opportunity-solution tree, which we did across both collaborative and solo sessions. This was a chance to smoke out even more ideas, but crucially to start rationalising and contextualising them all under the problem or user need we thought they would solve.

This technique, central to the book’s process, proved a really useful artefact for the pods as they built up their initial exploration backlogs, giving each idea a hypothesis about the opportunity it was meant to address.

A big tree of opportunities
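For anyone who hasn’t built one, an opportunity-solution tree is essentially a hierarchy: a desired outcome at the root, opportunities (user needs, which can nest) beneath it, and candidate solutions hanging off each opportunity. The Python sketch below is a hypothetical illustration of that structure and of flattening it into a backlog in which every idea keeps its hypothesis. The class names, fields and example needs are invented for illustration; they aren’t taken from our actual tree or prescribed by the book.

```python
from dataclasses import dataclass, field


@dataclass
class Idea:
    """A candidate solution plus the hypothesis tying it to its opportunity."""
    name: str
    hypothesis: str


@dataclass
class Opportunity:
    """A user need or pain point; can be broken down into sub-opportunities."""
    need: str
    ideas: list[Idea] = field(default_factory=list)
    children: list["Opportunity"] = field(default_factory=list)


@dataclass
class Outcome:
    """Root of the tree: the product outcome a pod is trying to move."""
    goal: str
    opportunities: list[Opportunity] = field(default_factory=list)


def backlog(outcome: Outcome) -> list[tuple[str, str, str]]:
    """Depth-first walk returning (need, idea, hypothesis) rows for a backlog."""
    rows: list[tuple[str, str, str]] = []

    def visit(opp: Opportunity) -> None:
        for idea in opp.ideas:
            rows.append((opp.need, idea.name, idea.hypothesis))
        for child in opp.children:
            visit(child)

    for opp in outcome.opportunities:
        visit(opp)
    return rows


# Invented example content, for illustration only.
tree = Outcome(
    goal="Increase weekly engagement",
    opportunities=[
        Opportunity(
            need="Feedback only happens at quarterly check-ins",
            ideas=[Idea("Weekly nudge email", "A reminder prompts more frequent feedback")],
            children=[
                Opportunity(
                    need="Writing feedback feels time-consuming",
                    ideas=[Idea("Feedback templates", "Templates cut the effort per entry")],
                ),
            ],
        ),
    ],
)

for need, idea, hypothesis in backlog(tree):
    print(f"{idea}: {hypothesis} (addresses: {need})")
```

Keeping the need on every backlog row is the point of the exercise: no idea enters the backlog without the opportunity it is supposed to serve.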

User tester recruitment

Continuous discovery relies on tight feedback loops with users, so we put out a bat-signal to our customer base to form a beta testers group that each pod could use to validate its ideas at every stage. We created a Slack group and an Intercom segment to stay in touch with them throughout.

✅ Pods

✅ Ideas

✅ Users

We had everything we needed to get started. That was eight weeks ago now, so how did it all pan out?!

Wins

Here are some of the things that paid off and that we want to keep up.

Team enthusiasm and buy-in

Classically, one of the big blockers to implementing a CD practice is getting buy-in from the rest of the business. Luckily, the Progression leadership team was very open to trying something new, and everyone was sold on the potential benefits.

The team similarly took to trying the new approach with positivity and adaptability. So many people jumped outside of their usual roles to fill gaps in the team makeup and do whatever needed doing. The momentum and energy felt great!

Maintaining focus

Another classic challenge with CD is that the team’s backlog gets overwhelmed with BAU work, tech debt, bugs and customer requirements. Capacity and focus end up being pulled away from the CD cycle, and the whole thing falls apart.

Happily, this didn’t happen. We agreed early on that we couldn’t move every needle at once, and we largely protected the team’s focus on the product goal. One particularly helpful activity was running a firebreak sprint before kicking off, which let us wrap up previous work and start with a clean slate.

Small-batch, learning-oriented delivery

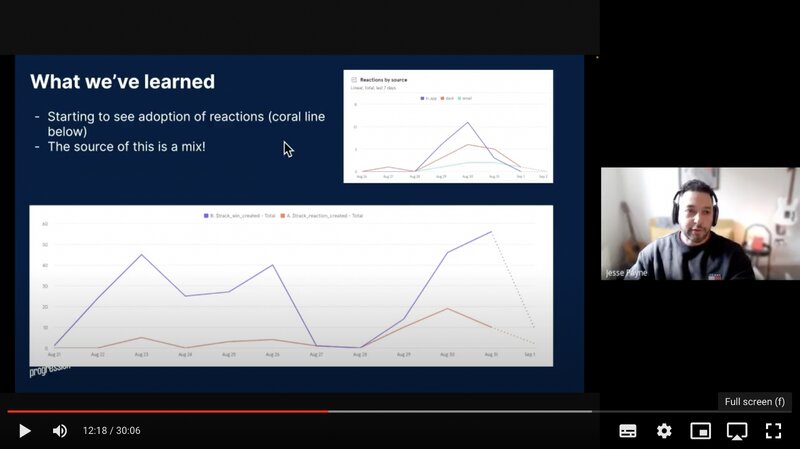

Designers and engineers committed hard to the CD approach of shipping small and lean to learn. The first few weeks were a flurry of quick wins, low-to-no design experiments and showing users work in progress. The pods went deep on understanding their opportunity space and were getting excited about defining what to ship as a result.

Our designer Jesse sharing some results in a ‘Pod Pulse’ meeting

Another good decision was to invest early in self-service experimentation infrastructure. We set up Mixpanel segments and dashboards and implemented Flipper feature flags, so that even people outside engineering could quickly toggle features on for beta users or for everyone in production. Having this instrumentation in place early on really helped us keep up our shipping and learning velocity.
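To illustrate what self-service toggling involves, here is a minimal, hypothetical Python analogue of the kinds of gate a feature-flag library such as Flipper offers: on for everyone, on for a group such as beta testers, or on for a percentage of users. This is not Flipper’s API (Flipper is a Ruby library), and the `Flag` class, its fields and the flag name are assumptions for illustration. Hashing the flag name together with the user ID gives each user a stable bucket, so a user who sees a feature at a 20% rollout keeps seeing it at 50%.

```python
import zlib
from dataclasses import dataclass, field


@dataclass
class Flag:
    """Hypothetical feature flag with three kinds of gate (not Flipper's API)."""
    name: str
    enabled_for_all: bool = False                   # on for everyone, i.e. production
    groups: set[str] = field(default_factory=set)   # e.g. {"beta"} for beta testers
    percentage: int = 0                             # gradual rollout, 0-100

    def _bucket(self, user_id: str) -> int:
        # Stable bucket in 0..99 per (flag, user) pair, so rollouts are sticky.
        return zlib.crc32(f"{self.name}:{user_id}".encode("utf-8")) % 100

    def is_enabled(self, user_id: str, user_groups: set[str]) -> bool:
        if self.enabled_for_all:
            return True
        if self.groups & user_groups:  # user belongs to an enabled group
            return True
        return self._bucket(user_id) < self.percentage


# Usage: ship to beta testers first, then widen the rollout.
nudge = Flag("weekly_nudge", groups={"beta"})
print(nudge.is_enabled("user-1", {"beta"}))  # beta tester sees it
print(nudge.is_enabled("user-2", set()))     # everyone else doesn't (yet)
```

Widening the audience is then a data change (raising `percentage` or setting `enabled_for_all`) rather than a code deploy, which is what makes it self-service.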

Checkpoints and accountability

We scheduled weekly checkpoints for the first four weeks to keep a regular pulse on how things were going and what the pods were learning. I could act quickly on any concerns or blockers raised, tweaking techniques, processes or goals ready for the next week’s activities.

We leaned on Progression’s company values of ownership and transparency by continuously challenging each other on whether the approach was working. We embedded a ‘question bank’ into our catch-up meeting Notion template, which anyone could dip into to question whether we could be doing anything differently. This often led the pods to reassess whether they could learn by running an experiment or research session instead of building something, and to check that their ideas were really going to move the intended objective. Questions included:

- How could we learn the same thing faster?

- What decisions are we delaying? Do we have to?

- Is this the best way to test? (interview vs testing vs code vs design)

- Is this idea a 10% or 10x improvement?

- How will we know when this is done?

- How might we do this in half the time?

- How can we unblock you right now?

- Is this the most important thing to work on right now?

Challenges

Once we were a few weeks in, we were able to identify some repeatedly raised challenges and concerns with how things were going. These have led to some changes we’re rolling into next quarter and beyond.

Here’s where we started seeing the cracks.

Unsustainable team setup & pace

Our retrospectives revealed that the engineers sometimes felt unproductive or unhelpful because they weren’t confident delivering with little design input, or participating in research and ideation sessions (though in reality the engineers contributed so many great ideas!). The pods were also struggling to meet regularly, make decisions and organise user insight sessions: the weekly cadence we’d set to maintain velocity felt dizzying.

These feelings were perhaps a symptom of delegating too much ownership too soon, especially as we didn’t have a dedicated product manager per pod, so the ‘product trio’ recommended in the book was missing a crucial role. This taught us a lot about balancing asking people to be adaptable and autonomous against asking them to cover roles outside their specialism or skillset. It led to me taking a more hands-on product manager role to support the teams.

Neglecting whole-product design exploration

I found that with CD our thinking was often constrained to ‘dreaming smaller’, because you need to start somewhere lean in order to learn anything. Our designers felt the pressure of envisioning whole new experiences for the product while also putting together MVP UX/UI for experiments and iterations we could ship quickly. We ended up shipping some features that didn’t initially deliver the intended learning because they felt too MVP or didn’t make sense in the context of the product as a whole.

Once we realised this, we started thinking about ‘hook loops’, where the whole user experience, from trigger to action to reward and further investment, is considered, designed and prioritised together. Delivering the entire loop takes more effort, but we started getting higher adoption and better-quality feedback on the beta features as a result. Taking this ‘whole-feature’ approach to our opportunities started to feel better suited to the current stage of our product (more on this below).

Learning from customers in a fast-changing B2B product

Most interestingly, I feel that the book glosses over the kinds of company, stage or sector for which the CD approach might not work as well, and I wonder whether it’s really a one-size-fits-all solution.

For context, Progression is a B2B SaaS company at the seed fundraising stage. We have healthy MRR and a stable customer base, but a lot of ambition to expand our product vision in the coming months to reach scalable growth and wider product-market fit.

I see two challenges with making CD effective in our scenario:

- Getting rapid, meaningful insight from a smaller user base. Our beta group was less engaged than we’d have liked (perhaps unsurprising for B2B users), and our relatively low-volume user base was too small for usage analytics to reach statistical significance. Despite our best efforts to engage users and be bold with experiment releases, we couldn’t get a steady flow of rapid, high-quality quantitative and qualitative insight to validate our assumptions at the velocity we’d hoped for.

- Validating ideas that require a change in behaviour or usage. It was hard to test our more game-changing ideas with existing customers because their expectations and use cases for the product are already embedded. For example, Progression is currently used mainly for quarterly check-ins, so there is little expectation of using it more habitually. We can’t say that our experiments to get people engaging more often necessarily failed; rather, they suggest that encouraging whole new behaviours takes longer to pay off than an ‘iterate/optimise’ type of goal, or is best tested with new cohorts who lack these embedded expectations.
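To put the significance problem in numbers, here is a rough sketch of the standard sample-size calculation for comparing two proportions, such as the share of users who engage weekly in a control group versus an experiment group. It uses the usual normal approximation for a two-sided test; the function name and the 20% and 25% rates in the example are hypothetical, not our real metrics. Detecting a lift from 20% to 25% at a 5% significance level with 80% power works out at roughly a thousand users in each group, far more than a typical B2B beta group.

```python
import math
from statistics import NormalDist


def sample_size_per_group(p_baseline: float, p_target: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Users needed in EACH group to detect p_baseline -> p_target
    with a two-sided two-proportion z-test (normal approximation)."""
    if not (0 < p_baseline < 1 and 0 < p_target < 1) or p_baseline == p_target:
        raise ValueError("rates must be in (0, 1) and differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_baseline * (1 - p_baseline) + p_target * (1 - p_target)
    effect = (p_target - p_baseline) ** 2
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect)


# Hypothetical example: weekly engagement of 20%, hoping an experiment lifts it to 25%.
n = sample_size_per_group(0.20, 0.25)
print(f"about {n} users per group, {2 * n} in total")
```

Because the required sample grows with the inverse square of the effect size, halving the lift you want to detect roughly quadruples the users you need, which is why small user bases push you towards bolder experiments or qualitative methods.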

What we'll do going forward

With all this in mind, I’ll finish up by highlighting some of the changes we’ve made to account for these continuous discovery challenges in our current situation.

Shipping and learning from more users, earlier

To get around the lack of rapid learning from the beta group, we started shipping experiments to larger segments of users more often, to get faster and more significant data back. We also tapped additional insight sources: we like Maze for its range of feedback tools and the ability to target tailored segments that mirror our intended audience.

Getting insight from prospective customers, rather than (or in addition to) current ones

Speaking to new users, who lack the embedded expectations of our current user base, yields insights that feel bolder and more visionary, and more aligned with finding PMF than with iterating on what we have. We can validate with tactics like marketing pages, fake doors and design mockups, which also means we need to build very little to support a rapid learning cycle.

Maintaining the continuous discovery mindset, but with bold conviction in our vision

Over the quarter we’ve solidified our product vision and have a clearer picture than ever of what our future opportunity space might be. We have a strategic roadmap of solid ideas, informed by insight gained over the quarter, that we believe will help our product hit PMF and get us to the next stage. We’re settling into a healthier rhythm that gives product the time to validate before we build, design the space to be more rigorous in execution, and engineering the pace to balance speed with quality.

But we won’t get complacent. We’ll keep up the habits of smoking out our assumptions, challenging our instincts, and seeking learnings early and often to help us assess if we’re succeeding.

I’ll let you know how it’s going in another six weeks.

Resources


Useful reading

- Continuous Discovery Habits (Teresa Torres)

- Design sprint (Google Ventures)

- Firebreak sprint (gov.uk)

- Hook Loops (Nir Eyal)

P.S. At Progression we’re defining what we think the future of career-confidence looks like. I’m biased but I think it’s pretty exciting. You can follow along on our Headway changelog, and I’m always up for a chat.